CIRM VIRTUAL EVENT WEBPAGE

|

Speakers Felix Abramovich (Tel Aviv University) Pierre Neuvial (Université de Toulouse) Dominique Picard (Université Paris-Diderot Paris 7) Aaditya K. Ramdas (Carnegie Mellon University) Veronika Rockova (University of Chicago) Etienne Roquain (UPMC Paris) Saharon Rosset (Tel Aviv University) Chiara Sabatti (Stanford University) Joseph Salmon (Université de Montpellier) Richard Samworth (University of Cambridge) David Siegmund (Stanford University) Weijie Su (Wharton, University of Pennsylvania) Daniel Yekutieli (Tel Aviv University) Stefan Wager (Stanford University) Jonas Wallin (University of Lund) Hua Wang (Wharton, University of Pennsylvania) |

Mathematical Methods of Modern Statistics 2

Méthodes Mathématiques en Statistiques Modernes 2 CONFERENCE

Organizing Committee: Malgorzata Bogdan (Wrocław University)

Scientific Committee: Malgorzata Bogdan (Wrocław University)

After the success of the first CIRM-Luminy meeting on Mathematical Methods of Modern Statistics (July 2017), we would like to continue the tradition. The title of the conference alludes to Harald Cramér's famous book Mathematical Methods of Statistics (1946), a landmark for both mathematics and statistics. The main objectives of the conference are to respond, at the highest international statistical and mathematical level, to:

(a) a strong need to reflect on, and summarize, the interactions between different branches of modern statistics and modern mathematics;
(b) the current scientific strategy for the development of Data Sciences in France and abroad.

We propose a wide spectrum of topics and general paradigms of modern statistics with deep mathematical implications. The major unifying topic is the analysis of high-dimensional data. Statistical inference based on such data is possible under certain assumptions on the structure of the underlying model, using different notions of model sparsity. The conference talks will give an overview of current knowledge on modern statistical methods addressing this issue, including modern graphical models, different methods of multiple testing and model selection, regularization techniques and missing data treatments. These topics will be viewed from both the frequentist and Bayesian perspectives, including modern nonparametric Bayes methods. |

Unfortunately, due to the unusual circumstances, we were forced to cancel the conference « Mathematical Methods of Modern Statistics 2 » in its original format. We will nevertheless organize a virtual version of it. We will record the talks and make them available on this webpage before June 15.

During the week which starts on June 15, we will organize virtual office hours in order to allow the participants to interact and discuss the content of the talks with their authors.

Best regards,

Małgorzata Bogdan, Ismael Castillo, Piotr Graczyk, Fabien Panloup, Frédéric Proïa, Étienne Roquain

|

You can submit questions related to the pre-recorded talks here.

Discussion rooms will be available to registered conference participants via the link below. Your access code to the rooms is provided by the organizers.

This page is accessible with a password issued by the organizers.

|

|

DISCUSSION SESSIONS

|

MONDAY 15 JUNE

Session: high dimensional statistics I DAY ORGANISERS: |

TUESDAY 16 JUNE

Session: Modern statistics in practice DAY ORGANISER: |

WEDNESDAY 17 JUNE

Session: high dimensional statistics II DAY ORGANISER: |

THURSDAY 18 JUNE

Session: modern theory in statistics DAY ORGANISER: |

FRIDAY 19 JUNE

Session: selective inference DAY ORGANISER: |

|

11:30

|

–

|

–

|

–

|

Shrinkage estimation of mean for complex multivariate normal distribution with unknown covariance when p > n

By Yoshihiko Konno Chairman J. Wesolowski |

–

|

|

15:00

|

15:15

OPENING |

POSTER SESSION

Konrad Furmanczyk Johan Larsson Hideto Nakashima « La Bibliothèque » Discussion Room Chairman P. Graczyk |

POSTER SESSION

Guillaume Maillard Patrick Tardivel Eva-Maria Walz « La Bibliothèque » Discussion Room Chairman F. Proïa |

_

|

POSTER SESSION

Marie Perrot-Dockès Zhiqi Bu Deborah Sulem « La Bibliothèque » Discussion Room Chairman F. Panloup |

|

15:30

|

High-dimensional, multiscale online changepoint detection

By Richard Samworth Chairman D. Siegmund Discussant S. Gerchinovitz |

Structure learning for CTBN’s – Bayesian inference

By Blazej Miasojedow Chairman E. Roquain |

High-dimensional classification by sparse logistic regression

By Felix Abramovich Chairman M. Bogdan |

Cholesky structures on matrices and their applications

By Hideyuki Ishi Chairman J. Wesolowski |

Optimal and Maximin Procedures for Multiple Testing Problems

By Saharon Rosset Chairman E. George |

|

16:00

|

The smoothed multivariate square-root Lasso: an optimization lens on concomitant estimation

By Joseph Salmon Chairman A. Molstad Discussant P. Tardivel |

Treatment effect estimation with missing attributes

By Julie Josse Chairman E. Roquain Discussant M. Escobar-Bach |

Scaling of scoring rules

By Jonas Wallin Chairman M. Bogdan Discussant B. Miasojedow |

Quasi logistic distributions and Gaussian scale mixing

By Gérard Letac Chairman J. Wesolowski |

Optimal control of false discovery criteria in the general two-group model

By Ruth Heller Chairman E. George |

|

16:30

|

De-biasing arbitrary convex regularizers and asymptotic normality

By Pierre Bellec Chairman M. Bogdan Discussant P. Tardivel |

Isotonic Distributional Regression (IDR): Leveraging Monotonicity, Uniquely So!

By Tilmann Gneiting Chairman P. Graczyk Discussant J. Wallin |

Hierarchical Bayes Modeling for Large-Scale Inference

By Daniel Yekutieli Chairman E. Candes Discussant J. Rousseau |

How to estimate a density on a spider web?

By Dominique Picard Chairman G. Letac |

Sparse multiple testing: can one estimate the null distribution?

By Etienne Roquain Chairman E. George |

|

17:00

|

COFFEE BREAK

|

COFFEE BREAK

|

COFFEE BREAK

|

COFFEE BREAK

|

COFFEE BREAK

|

|

17:30

|

Floodgate: Inference for Model-Free Variable Importance

By Lucas Janson Chairman E. Candes Discussant A. Weinstein |

Knockoff genotypes: value in counterfeit

By Chiara Sabatti Chairman J. Rousseau Discussant S. Robin |

High dimensional statistics

By Stefan Wager Chairman F. Abramovich Discussant F. Panloup |

17:30 – 18:30

JOINT SEMINAR: Gaussian Differential Privacy, by Weijie Su. Joint session with the International Seminar on Selective Inference: https://www.selectiveinferenceseminar.com/ |

Post hoc bounds on false positives using reference families

By Pierre Neuvial Chairman M. Bogomolov |

|

18:00

|

The Price of Competition: Effect Size Heterogeneity Matters in High Dimensions!

By Hua Wang Chairman E. Candes Discussant Pragya Sur |

Change: Detection, Estimation, Segmentation

By David Siegmund Chairman R. Samworth Discussant K. Podgorski |

Bayesian Spatial Adaptation

By Veronika Rockova Chairman J. Rousseau Discussant E. George |

–

|

Universal inference using the split likelihood ratio test

By Aaditya Ramdas Chairman E. Roquain

|

|

19:00

|

–

|

–

|

–

|

18:45

Informal discussion with Julie Josse |

Catch-up session

Consistent model selection criteria and goodness-of-fit test for common time series models By Jean-Marc Bardet Chairman P. Graczyk |

MONDAY 15 JUNE – Session: high dimensional statistics I

|

High-dimensional, multiscale online changepoint detection

By Richard J. Samworth

Abstract. We introduce a new method for high-dimensional, online changepoint detection in settings where a p-variate Gaussian data stream may undergo a change in mean. The procedure works by performing likelihood ratio tests against simple alternatives of different scales in each coordinate, and then aggregating test statistics across scales and coordinates.
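
As a rough illustration of a likelihood ratio test against a simple alternative, in one coordinate and at one scale, the sketch below uses the classical one-sided CUSUM recursion for a unit-variance Gaussian stream whose mean may shift from 0 to `b`. It is not the procedure of the talk: the function name `cusum_alarm_time`, the scale `b` and the threshold are assumptions chosen for illustration, and no aggregation across scales or coordinates is attempted.

```python
def cusum_alarm_time(stream, b, threshold):
    """Return the 1-based index at which the CUSUM statistic first exceeds
    `threshold`, or None if it never does.

    Assumes unit-variance Gaussian observations with pre-change mean 0 and
    tests against the simple alternative mean `b` (hypothetical scale).
    """
    stat = 0.0
    for n, x in enumerate(stream, start=1):
        # Log-likelihood ratio of N(b, 1) against N(0, 1) for one observation.
        llr = b * (x - b / 2.0)
        # Page's recursion: constant work per new observation.
        stat = max(stat + llr, 0.0)
        if stat > threshold:
            return n
    return None


# Deterministic toy stream: mean 0 for 20 steps, then mean 2.
toy_stream = [0.0] * 20 + [2.0] * 10
alarm = cusum_alarm_time(toy_stream, b=1.0, threshold=4.0)
```

The statistic is updated from its previous value only, so the cost of processing a new observation does not grow with the number of past observations.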

The algorithm is online in the sense that its worst-case computational complexity per new observation, namely O(p^2 log(ep)), is independent of the number of previous observations; in practice, it may even be significantly faster than this. We prove that the patience, or average run length under the null, of our procedure is at least at the desired nominal level, and provide guarantees on its response delay under the alternative that depend on the sparsity of the vector of mean change. Simulations confirm the practical effectiveness of our proposal.

De-biasing arbitrary convex regularizers and asymptotic normality

By Pierre C. Bellec

|

The smoothed multivariate square-root Lasso: an optimization lens on concomitant estimation

By Joseph Salmon

Abstract. In high dimensional sparse regression, pivotal estimators are estimators for which the optimal regularization parameter is independent of the noise level. The canonical pivotal estimator is the square-root Lasso, formulated along with its derivatives as a « non-smooth + non-smooth » optimization problem.
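
For reference, the single-task square-root Lasso is usually written as below. The notation is assumed here rather than taken from the talk: design matrix $X \in \mathbb{R}^{n \times p}$, response $y \in \mathbb{R}^n$ and regularization parameter $\lambda > 0$. Both terms are non-smooth, because the data-fitting term is a norm rather than a squared norm.

```latex
\hat\beta \in \operatorname*{arg\,min}_{\beta \in \mathbb{R}^p}
  \frac{1}{\sqrt{n}} \lVert y - X\beta \rVert_2 + \lambda \lVert \beta \rVert_1
```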

Modern techniques to solve these include smoothing the data-fitting term, to benefit from fast efficient proximal algorithms. In this work we focus on minimax sup-norm convergence rates for non-smoothed and smoothed, single-task and multitask square-root Lasso-type estimators. We also provide some guidelines on how to set the smoothing hyperparameter, and illustrate the value of such guidelines on synthetic data. This is joint work with Quentin Bertrand (INRIA), Mathurin Massias, Olivier Fercoq and Alexandre Gramfort.

Floodgate: Inference for Model-Free Variable Importance

By Lucas Janson

Abstract. Many modern applications seek to understand the relationship between an outcome variable of interest and a high-dimensional set of covariates. Often the first question asked is which covariates are important in this relationship, but the immediate next question, which in fact subsumes the first, is how important each covariate is in this relationship. In parametric regression this question is answered through confidence intervals on the parameters. But without making substantial assumptions about the relationship between the outcome and the covariates, it is unclear even how to measure variable importance, and for most sensible choices even less clear how to provide inference for it under reasonable conditions. In this paper we propose floodgate, a novel method to provide asymptotic inference for a scalar measure of variable importance which we argue has universal appeal, while assuming nothing but moment bounds about the relationship between the outcome and the covariates. We take a model-X approach and thus assume the covariate distribution is known, but extend floodgate to the setting that only a model for the covariate distribution is known and also quantify its robustness to violations of the modeling assumptions. We demonstrate floodgate’s performance through extensive simulations and apply it to data from the UK Biobank to quantify the effects of genetic mutations on traits of interest.

|

|

The Price of Competition: Effect Size Heterogeneity Matters in High Dimensions!

By Hua Wang

|

Abstract. In high-dimensional regression, the number of explanatory variables with nonzero effects – often referred to as sparsity – is an important measure of the difficulty of the variable selection problem. As a complement to sparsity, this paper introduces a new measure termed effect size heterogeneity for a finer-grained understanding of the trade-off between type I and type II errors or, equivalently, false and true positive rates using the Lasso. Roughly speaking, a regression coefficient vector has higher effect size heterogeneity than another vector (of the same sparsity) if the nonzero entries of the former are more heterogeneous than those of the latter in terms of magnitudes. From the perspective of this new measure, we prove that in a regime of linear sparsity, false and true positive rates achieve the optimal trade-off uniformly along the Lasso path when this measure is maximum in the sense that all nonzero effect sizes have very different magnitudes, and the worst-case trade-off is achieved when it is minimum in the sense that all nonzero effect sizes are about equal. Moreover, we demonstrate that the Lasso path produces an optimal ranking of explanatory variables in terms of the rank of the first false variable when the effect size heterogeneity is maximum, and vice versa. Metaphorically, these two findings suggest that variables with comparable effect sizes, no matter how large they are, would compete with each other along the Lasso path, leading to an increased hardness of the variable selection problem. Our proofs use techniques from approximate message passing theory as well as a novel argument for estimating the rank of the first false variable.

|

TUESDAY 16 JUNE – Session: Modern statistics in practice

|

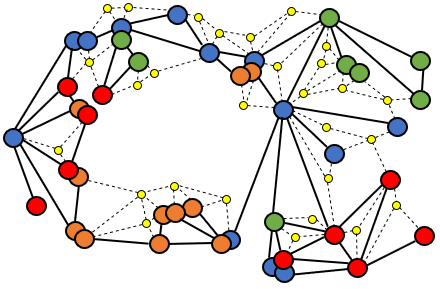

Structure learning for CTBN’s

By Blazej Miasojedow

Abstract. Continuous time Bayesian networks (CTBNs) represent a class of stochastic processes that can be used to model complex phenomena; for instance, they can describe interactions occurring in living processes, in social science models or in medicine. The literature on this topic usually focuses on the case where the dependence structure of a system is known and the task is to determine conditional transition intensities (parameters of the network). In this paper, we study the structure learning problem, which is a more challenging task and on which existing research is limited. The approach we propose is based on a penalized likelihood method. We prove that our algorithm, under mild regularity conditions, recognizes the dependence structure of the graph with high probability. We also investigate the properties of the procedure in numerical studies to demonstrate its effectiveness. |

Treatment effect estimation with missing attributes

By Julie Josse

Abstract. Inferring causal effects of a treatment or policy from observational data is central to many applications. However, state-of-the-art methods for causal inference suffer when covariates have missing values, which is ubiquitous in applications.

Missing data greatly complicate causal analyses as they require either strong assumptions about the missing data generating mechanism or an adapted unconfoundedness hypothesis. In this talk, I will first provide a classification of existing methods according to the main underlying assumptions, which are based either on variants of the classical unconfoundedness assumption or on assumptions about the mechanism that generates the missing values. Then, I will present two recent contributions on this topic: (1) an extension of doubly robust estimators that allows handling of missing attributes, and (2) an approach to causal inference based on variational autoencoders adapted to incomplete data. I will illustrate the topic on an observational medical database which has heterogeneous data and a multilevel structure to assess the impact of the administration of a treatment on survival. |

|

Isotonic Distributional Regression (IDR): Leveraging Monotonicity, Uniquely So!

By Tilmann Gneiting

Abstract. There is an emerging consensus in the transdisciplinary literature that the ultimate goal of regression analysis is to model the conditional distribution of an outcome, given a set of explanatory variables or covariates. This new approach is called « distributional regression », and marks a clear break from the classical view of regression, which has focused on estimating a conditional mean or quantile only. Isotonic Distributional Regression (IDR) learns conditional distributions that are simultaneously optimal relative to comprehensive classes of relevant loss functions, subject to monotonicity constraints in terms of a partial order on the covariate space. This IDR solution is exactly computable and requires neither approximations nor implementation choices, except for the selection of the partial order. Despite being an entirely generic technique, IDR is strongly competitive with state-of-the-art methods in a case study on probabilistic precipitation forecasts from a leading numerical weather prediction model.

Joint work with Alexander Henzi and Johanna F. Ziegel. |

Knockoff genotypes: value in counterfeit

By Chiara Sabatti

Abstract. The framework of knockoffs has been recently proposed to perform variable selection under rigorous type-I error control, without relying on strong modeling assumptions. We extend the methodology of knockoffs to a rich family of problems where the distribution of the covariates can be described by a hidden Markov model. We develop an exact and efficient algorithm to sample knockoff variables in this setting and then argue that, combined with the existing selective framework, this provides a natural and powerful tool for performing principled inference in genomewide association studies with guaranteed false discovery rate control. To handle the high level of dependence that can exist between SNPs in linkage disequilibrium, we propose a multi-resolution analysis, that simultaneously identifies loci of importance and provides results analogous to those obtained in fine mapping.

This is joint work with Matteo Sesia, Eugene Katsevich, Stephen Bates and Emmanuel Candes. |

Change: Detection, Estimation, Segmentation

By David O. Siegmund

|

|

Abstract. The maximum score statistic is used to detect and estimate changes in the level, slope, or other local feature of a sequence of observations, and to segment the sequence when there appear to be multiple changes. Control of false positive errors when observations are auto-correlated is achieved by using a first order autoregressive model. True changes in level or slope can lead to badly biased estimates of the autoregressive parameter and variance, which can result in a loss of power. Modifications of the natural estimators to deal with this difficulty are partially successful. Applications to temperature time series, atmospheric CO2 levels, COVID-19 incidence, excess deaths, copy number variations, and weather extremes illustrate the general theory.

This is joint research with Xiao Fang. |

WEDNESDAY 17 JUNE – Session: high dimensional statistics II

|

High-dimensional classification by sparse logistic regression

By Felix Abramovich

Abstract. In this talk we consider high-dimensional classification. We discuss first high-dimensional binary classification by sparse logistic regression, propose a model/feature selection procedure based on penalized maximum likelihood with a complexity penalty on the model size and derive the non-asymptotic bounds for the resulting misclassification excess risk. Implementation of any complexity penalty-based criterion, however, requires a combinatorial search over all possible models. To find a model selection procedure computationally feasible for high-dimensional data, we consider logistic Lasso and Slope classifiers and show that they also achieve the optimal rate. We extend further the proposed approach to multiclass classification by sparse multinomial logistic regression.

This is joint work with Vadim Grinshtein and Tomer Levy. |

Scaling of scoring rules

By Jonas Wallin

Abstract. Averages of proper scoring rules are often used to rank probabilistic forecasts. In many cases, the individual observations and their predictive distributions in these averages have variable scale (variance). I will show that some of the most popular proper scoring rules, such as the continuous ranked probability score (CRPS), up-weight observations with large uncertainty, which can lead to unintuitive rankings. We have developed a new scoring rule, scaled CRPS (SCRPS). This new proper scoring rule is locally scale invariant and therefore works in the case of varying uncertainty. I will demonstrate how this affects model selection through parameter estimation in spatial statistics. |
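
The scale dependence of the CRPS can be checked numerically for Gaussian predictive distributions, where the score has a closed form. The sketch below is illustrative only. The CRPS formula is the standard one, E|X - y| - 0.5 E|X - X'|. The scaled variant uses the form -E|X - y| / E|X - X'| - 0.5 log E|X - X'|, which is an assumption here, since the abstract does not state the definition. All function names are hypothetical.

```python
import math
from statistics import NormalDist

_STD = NormalDist()


def expected_abs_error(mu, sigma, y):
    """E|X - y| for X ~ N(mu, sigma^2)."""
    z = (y - mu) / sigma
    return sigma * (z * (2.0 * _STD.cdf(z) - 1.0) + 2.0 * _STD.pdf(z))


def expected_abs_spread(sigma):
    """E|X - X'| for independent X, X' ~ N(mu, sigma^2)."""
    return 2.0 * sigma / math.sqrt(math.pi)


def crps_normal(mu, sigma, y):
    """CRPS (lower is better): E|X - y| - 0.5 * E|X - X'|."""
    return expected_abs_error(mu, sigma, y) - 0.5 * expected_abs_spread(sigma)


def scrps_normal(mu, sigma, y):
    """Scaled CRPS in a positively oriented form (assumed definition)."""
    spread = expected_abs_spread(sigma)
    return -expected_abs_error(mu, sigma, y) / spread - 0.5 * math.log(spread)


# Same standardized error (one standard deviation), two different scales.
crps_ratio = crps_normal(0.0, 10.0, 10.0) / crps_normal(0.0, 1.0, 1.0)
scrps_shift = scrps_normal(0.0, 10.0, 10.0) - scrps_normal(0.0, 1.0, 1.0)
```

With the same standardized error, the CRPS grows linearly with the predictive standard deviation, so high-variance observations dominate an average. Under the assumed form, the scaled score changes only by an additive term that is logarithmic in the scale.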

|

Hierarchical Bayes Modeling for Large-Scale Inference

By Daniel Yekutieli

Abstract. We present a novel theoretical framework for statistical analysis of large-scale problems that builds on the Robbins compound decision approach. We present a hierarchical Bayesian approach for implementing this framework and illustrate its application to simulated data.

|

Experimenting in Equilibrium

By Stefan Wager

Abstract. Classical approaches to experimental design assume that intervening on one unit does not affect other units. There are many important settings, however, where this non-interference assumption does not hold, e.g., when running experiments on supply-side incentives on a ride-sharing platform or subsidies in an energy marketplace. In this paper, we introduce a new approach to experimental design in large-scale stochastic systems with considerable cross-unit interference, under an assumption that the interference is structured enough that it can be captured using mean-field asymptotics. Our approach enables us to accurately estimate the effect of small changes to system parameters by combining unobtrusive randomization with light-weight modeling, all while remaining in equilibrium. We can then use these estimates to optimize the system by gradient descent. Concretely, we focus on the problem of a platform that seeks to optimize supply-side payments p in a centralized marketplace where different suppliers interact via their effects on the overall supply-demand equilibrium, and show that our approach enables the platform to optimize p based on perturbations whose magnitude can get vanishingly small in large systems.

|

Bayesian Spatial Adaptation

By Veronika Ročková

|

|

Abstract. This talk addresses the following question: “Can regression trees do what other machine learning methods cannot?” To answer this question, we consider the problem of estimating regression functions with spatial inhomogeneities. Many real life applications involve functions that exhibit a variety of shapes including jump discontinuities or high-frequency oscillations. Unfortunately, the overwhelming majority of existing asymptotic minimaxity theory (for density or regression function estimation) is predicated on homogeneous smoothness assumptions which are inadequate for such data. Focusing on locally Hölder functions, we provide locally adaptive posterior concentration rate results under the supremum loss. These results certify that trees can adapt to local smoothness by uniformly achieving the point-wise (near) minimax rate. Such results were previously unavailable for regression trees (forests). Going further, we construct locally adaptive credible bands whose width depends on local smoothness and which achieve uniform coverage under local self-similarity. Unlike many other machine learning methods, Bayesian regression trees thus provide valid uncertainty quantification. To highlight the benefits of trees, we show that Gaussian processes cannot adapt to local smoothness by showing lower bound results under a global estimation loss. Bayesian regression trees are thus uniquely suited for estimation and uncertainty quantification of spatially inhomogeneous functions.

|

THURSDAY 18 JUNE – Session: modern theory in statistics

|

Shrinkage estimation of mean for complex multivariate normal distribution with unknown covariance when p > n

By Yoshihiko Konno

Abstract. We consider the problem of estimating the mean vector of the multivariate complex normal distribution with unknown covariance matrix under an invariant loss function when the sample size is smaller than the dimension of the mean vector. Following the approach of Chételat and Wells (2012, Ann. Statist., p. 3137-3160), we show that a modification of Baranchik-type estimators beats the MLE if it satisfies certain conditions. Based on this result, we propose the James-Stein-like shrinkage and its positive-part estimators.
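
For readers unfamiliar with shrinkage, the classical positive-part James-Stein estimator gives the basic idea in a much simpler setting than the one in the talk. The sketch below assumes a single real observation vector with known identity covariance and dimension at least 3. It is not the complex, unknown-covariance, p > n estimator of the abstract, and the function name is hypothetical.

```python
def positive_part_james_stein(x):
    """Shrink the observation vector x towards the origin.

    Classical setting (an assumption, simpler than the talk's): a single
    observation x ~ N_p(theta, I_p) with real entries and p >= 3.
    """
    p = len(x)
    if p < 3:
        raise ValueError("James-Stein shrinkage needs dimension p >= 3")
    squared_norm = sum(v * v for v in x)
    if squared_norm == 0.0:
        return [0.0] * p
    # Shrinkage factor 1 - (p - 2) / ||x||^2, truncated at zero.
    factor = max(0.0, 1.0 - (p - 2) / squared_norm)
    return [factor * v for v in x]


shrunk = positive_part_james_stein([3.0, 4.0, 0.0])
```

The truncation at zero is what distinguishes the positive-part version: without it, observations close to the origin would be multiplied by a negative factor.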

|

Cholesky structures on matrices and their applications

By Hideyuki Ishi

Abstract. As a generalization of the fill-in free property of a sparse positive definite real symmetric matrix with respect to the Cholesky decomposition, we introduce a notion of (quasi-)Cholesky structure for a real vector space of symmetric matrices. The cone of positive definite symmetric matrices in a vector space with a quasi-Cholesky structure admits explicit calculations and rich analysis similar to the ones for the Gaussian selection model associated to a decomposable graph. In particular, we can apply our method to a decomposable graphical model with a vertex permutation symmetry.

|

|

Quasi logistic distributions and Gaussian scale mixing

By Gérard Letac

Abstract. A quasi logistic distribution on the real line has density proportional to $(\cosh x + \cos a)^{-1}$. If $V > 0$ and $Z$ with standard normal law are independent, we say that $\sqrt{V}$ has a quasi Kolmogorov distribution if $Z\sqrt{V}$ is quasi logistic. We study the numerous properties of these generalizations of the logistic and Kolmogorov laws.
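
For orientation, the density can be normalized explicitly when $0 < a < \pi$. This range is assumed here, as the abstract does not state it. The normalization uses the classical integral $\int_{-\infty}^{\infty} dx / (\cosh x + \cos a) = 2a / \sin a$:

```latex
f_a(x) = \frac{\sin a}{2a} \cdot \frac{1}{\cosh x + \cos a},
\qquad x \in \mathbb{R}, \quad 0 < a < \pi .
```

At $a = \pi/2$ this reduces to the hyperbolic secant density $1/(\pi \cosh x)$, and as $a \to 0$ it tends to the logistic density $1/(2(1 + \cosh x))$.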

|

How to estimate a density on a spider web?

By Dominique Picard

|

|

Consistent model selection criteria and goodness-of-fit test for common time series models

By Jean-Marc Bardet

|

Abstract. We study the model selection problem in a large class of causal time series models, which includes the ARMA or AR(∞) processes, as well as the GARCH or ARCH(∞), APARCH, ARMA-GARCH and many other processes. To tackle this issue, we consider a penalized contrast based on the quasi-likelihood of the model. We provide sufficient conditions for the penalty term to ensure the consistency of the proposed procedure as well as the consistency and the asymptotic normality of the quasi-maximum likelihood estimator of the chosen model. We also propose a tool for diagnosing the goodness-of-fit of the chosen model based on a portmanteau test. Monte-Carlo experiments and numerical applications on illustrative examples are performed to highlight the obtained asymptotic results. Moreover, using a data-driven choice of the penalty, they show the practical efficiency of this new model selection procedure and portmanteau test. |

Gaussian Differential Privacy

By Weijie Su

Abstract: Privacy-preserving data analysis has been put on a firm mathematical foundation since the introduction of differential privacy (DP) in 2006. This privacy definition, however, has some well-known weaknesses: notably, it does not tightly handle composition. In this talk, we propose a relaxation of DP that we term « f-DP », which has a number of appealing properties and avoids some of the difficulties associated with prior relaxations. First, f-DP preserves the hypothesis testing interpretation of differential privacy, which makes its guarantees easily interpretable. It allows for lossless reasoning about composition and post-processing, and notably, a direct way to analyze privacy amplification by subsampling. We define a canonical single-parameter family of definitions within our class that is termed « Gaussian Differential Privacy », based on hypothesis testing of two shifted normal distributions. We prove that this family is focal to f-DP by introducing a central limit theorem, which shows that the privacy guarantees of any hypothesis-testing based definition of privacy (including differential privacy) converge to Gaussian differential privacy in the limit under composition. This central limit theorem also gives a tractable analysis tool. We demonstrate the use of the tools we develop by giving an improved analysis of the privacy guarantees of noisy stochastic gradient descent. This is joint work with Jinshuo Dong and Aaron Roth.
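
The hypothesis testing view can be made concrete with the trade-off function between two shifted unit-variance normal distributions. The sketch below is a minimal illustration with hypothetical function names. It uses the closed form G_mu(alpha) = Phi(Phi^{-1}(1 - alpha) - mu) and the composition rule mu = sqrt(mu_1^2 + ... + mu_n^2), both stated here from the Gaussian differential privacy literature rather than from the abstract itself.

```python
import math
from statistics import NormalDist

_STD = NormalDist()


def gdp_tradeoff(alpha, mu):
    """Trade-off function G_mu(alpha) = Phi(Phi^{-1}(1 - alpha) - mu).

    Gives the smallest type II error achievable at type I error alpha when
    testing N(0, 1) against N(mu, 1).
    """
    if alpha <= 0.0:
        return 1.0
    if alpha >= 1.0:
        return 0.0
    return _STD.cdf(_STD.inv_cdf(1.0 - alpha) - mu)


def compose_gdp(mus):
    """Privacy parameter of the composition of mu_i-GDP mechanisms."""
    return math.sqrt(sum(m * m for m in mus))


# Composing a 3-GDP and a 4-GDP mechanism.
total_mu = compose_gdp([3.0, 4.0])
```

A value of mu equal to 0 gives the perfect-privacy line 1 - alpha, and larger values of mu pull the curve towards zero, meaning the two hypotheses are easier to tell apart.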

Relevant paper

(Seminar hosted jointly with the International Seminar on Selective Inference, ISSI.) https://www.selectiveinferenceseminar.com/

FRIDAY 19 JUNE – Session: selective inference

|

Optimal and maximin procedures for multiple testing problems

By Saharon Rosset

Abstract. Multiple testing problems are a staple of modern statistics. The fundamental objective is to reject as many false null hypotheses as possible, subject to controlling an overall measure of false discovery, like family-wise error rate (FWER) or false discovery rate (FDR). We formulate multiple testing of simple hypotheses as an infinite-dimensional optimization problem, seeking the most powerful rejection policy which guarantees strong control of the selected measure. We show that for exchangeable hypotheses, for FWER or FDR and relevant notions of power, these problems lead to infinite programs that can provably be solved. We explore maximin rules for complex alternatives, and show they can be found in practice, leading to improved practical procedures compared to existing alternatives. We derive explicit optimal tests for FWER or FDR control for three independent normal means. We find that the power gain over natural competitors is substantial in all settings examined. We apply our optimal maximin rule to subgroup analyses in systematic reviews from the Cochrane library, leading to an increased number of findings compared to existing alternatives.
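
As a point of comparison for FDR control, the standard Benjamini-Hochberg step-up rule can be sketched in a few lines. This is a well-known baseline, not the optimal or maximin procedure of the talk, and the function name is hypothetical.

```python
def benjamini_hochberg(p_values, alpha):
    """Return the sorted indices of hypotheses rejected by the BH step-up rule."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Largest k such that the k-th smallest p-value is at most alpha * k / m.
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= alpha * rank / m:
            k_max = rank
    return sorted(order[:k_max])


rejected = benjamini_hochberg([0.001, 0.025, 0.029, 0.9, 0.95], alpha=0.05)
```

In this toy input the second smallest p-value misses its own threshold, but it is still rejected because the third one passes. That is the step-up behaviour.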

|

Optimal control of false discovery criteria in the general two-group model

By Ruth Heller

Abstract. The highly influential two-group model in testing a large number of statistical hypotheses assumes that the test statistics are drawn independently from a mixture of a high probability null distribution and a low probability alternative. Optimal control of the marginal false discovery rate (mFDR), in the sense that it provides maximal power (expected true discoveries) subject to mFDR control, is known to be achieved by thresholding the local false discovery rate (locFDR), i.e., the probability of the hypothesis being null given the set of test statistics, with a fixed threshold.

We address the challenge of controlling optimally the popular false discovery rate (FDR) or positive FDR (pFDR) rather than mFDR in the general two-group model, which also allows for dependence between the test statistics. These criteria are less conservative than the mFDR criterion, so they make more rejections in expectation. We derive their optimal multiple testing (OMT) policies, which turn out to be thresholding the locFDR with a threshold that is a function of the entire set of statistics. We develop an efficient algorithm for finding these policies, and use it for problems with thousands of hypotheses. We illustrate these procedures on gene expression studies. |
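
The fixed-threshold locFDR rule mentioned for mFDR control is easy to write down in the simplest independent Gaussian two-group model. The sketch below assumes a N(0, 1) null, a N(alt_mean, alt_sd^2) alternative and a known null proportion, all chosen for illustration. It does not implement the data-dependent thresholds derived in the talk, and under dependence the locFDR would condition on the whole set of statistics rather than on one value.

```python
from statistics import NormalDist


def local_fdr(z, pi0, alt_mean, alt_sd=1.0):
    """Posterior null probability in an independent two-group model.

    Assumed for illustration: null density N(0, 1), alternative density
    N(alt_mean, alt_sd^2), null proportion pi0.
    """
    f0 = NormalDist(0.0, 1.0).pdf(z)
    f1 = NormalDist(alt_mean, alt_sd).pdf(z)
    mixture = pi0 * f0 + (1.0 - pi0) * f1
    return pi0 * f0 / mixture


def reject_by_fixed_threshold(z_values, threshold, pi0, alt_mean):
    """Indices whose locFDR falls at or below a fixed threshold."""
    return [i for i, z in enumerate(z_values)
            if local_fdr(z, pi0, alt_mean) <= threshold]


selected = reject_by_fixed_threshold([0.0, 1.5, 4.0], 0.2, pi0=0.9, alt_mean=3.0)
```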

|

Sparse multiple testing: can one estimate the null distribution?

By Etienne Roquain

Abstract. When performing multiple testing, adjusting the distribution of the null hypotheses is ubiquitous in applications. However, the effect of such an operation remains largely unknown, especially in terms of false discovery proportion (FDP) and true discovery proportion (TDP). In this talk, we explore this issue in the most classical case where the null distributions are Gaussian with unknown rescaling parameters (mean and variance) and where the Benjamini-Hochberg (BH) procedure is applied after a data-rescaling step. |

Post hoc bounds on false positives using reference families

By Pierre Neuvial

Abstract. We present confidence bounds on the false positives contained in subsets of selected null hypotheses. As the coverage probability holds simultaneously over all possible subsets, these bounds can be applied to an arbitrary number of possibly data-driven subsets.

These bounds are built via a two-step approach. First, build a family of candidate rejection subsets together with associated bounds, holding uniformly on the number of false positives they contain (call this a reference family). Then, interpolate from this reference family to find a bound valid for any subset. This general program is exemplified for two particular types of reference families: (i) when the bounds are fixed and the subsets are p-value level sets; (ii) when the subsets are fixed and spatially structured, and the bounds are estimated. |
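
The interpolation step can be sketched for a reference family made of p-value level sets with fixed bounds. The example assumes a Simes-type family, R_k = {i : p_i <= alpha * k / m} with at most k - 1 false positives in each R_k. The validity of such a family needs conditions on the p-values (for instance independence) that are not checked here. The function name and the toy data are hypothetical.

```python
def simes_post_hoc_bound(p_values, subset, alpha):
    """Upper bound on the number of false positives in `subset`.

    Interpolates from the reference family R_k = {i : p_i <= alpha * k / m},
    each assumed to contain at most k - 1 false positives (Simes-type family,
    an assumption of this sketch).
    """
    m = len(p_values)
    bound = len(subset)
    for k in range(1, m + 1):
        # Items of the subset outside R_k may all be false positives;
        # items inside R_k contribute at most k - 1 of them.
        outside = sum(1 for i in subset if p_values[i] > alpha * k / m)
        bound = min(bound, outside + k - 1)
    return bound


toy_p = [0.001, 0.002, 0.5, 0.6, 0.9]
bound_small = simes_post_hoc_bound(toy_p, [0, 1], alpha=0.05)
bound_all = simes_post_hoc_bound(toy_p, [0, 1, 2, 3, 4], alpha=0.05)
```

Because the bound is computed for whichever subset is passed in, the same function can be called on several subsets chosen after looking at the data.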

|

|

Universal inference using the split likelihood ratio test

By Aaditya K. Ramdas

Abstract. We propose a general method for constructing confidence sets and hypothesis tests that have finite-sample guarantees without regularity conditions. We refer to such procedures as “universal.” The method is very simple and is based on a modified version of the usual likelihood ratio statistic, which we call “the split likelihood ratio test” (split LRT) statistic. The (limiting) null distribution of the classical likelihood ratio statistic is often intractable when used to test composite null hypotheses in irregular statistical models. Our method is especially appealing for statistical inference in these complex setups. The method we suggest works for any parametric model and also for some nonparametric models, as long as computing a maximum likelihood estimator (MLE) is feasible under the null. Canonical examples arise in mixture modeling and shape-constrained inference, for which constructing tests and confidence sets has been notoriously difficult. We also develop various extensions of our basic methods. We show that in settings when computing the MLE is hard, for the purpose of constructing valid tests and intervals, it is sufficient to upper bound the maximum likelihood. We investigate some conditions under which our methods yield valid inferences under model-misspecification. Further, the split LRT can be used with profile likelihoods to deal with nuisance parameters, and it can also be run sequentially to yield anytime-valid p-values and confidence sequences. Finally, when combined with the method of sieves, it can be used to perform model selection with nested model classes.
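
A minimal sketch of the split likelihood ratio idea, in the simplest possible model, may help fix ideas. It assumes unit-variance Gaussian data and a point null on the mean, so that the null MLE is trivial. The function name, the choice of the sample mean as the estimator on the second half, and the toy data are all assumptions of the example.

```python
import math


def split_lrt_rejects(d0, d1, alpha, null_mean=0.0):
    """Split likelihood ratio test of H0: mean = null_mean for N(mean, 1) data.

    d1 is used only to pick an estimate; the likelihood ratio is then
    evaluated on the held-out half d0.
    """
    # Any estimator computed from d1 may be used; the sample mean is one choice.
    theta_hat_1 = sum(d1) / len(d1)
    # Under a point null, the null MLE on d0 is just null_mean.
    log_ratio = sum(
        ((x - null_mean) ** 2 - (x - theta_hat_1) ** 2) / 2.0 for x in d0
    )
    # Reject when the split likelihood ratio exceeds 1 / alpha.
    return log_ratio > math.log(1.0 / alpha)


shifted = split_lrt_rejects([2.0, 2.1, 1.9, 2.0], [2.0, 2.0, 2.0, 2.0], alpha=0.05)
centered = split_lrt_rejects([0.1, -0.1, 0.0, 0.05], [0.05, -0.05, 0.0, 0.0], alpha=0.05)
```

The threshold 1 / alpha needs no asymptotic distribution, which is what makes the test usable in irregular models. The price is some loss of power from splitting the sample.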

|
